Completing vera.ai – All About AI-Assisted Verification

At the end of 2025, we concluded another EU co-funded research project. Its title: vera.ai, short for "VERification Assisted by Artificial Intelligence", which already says a fair bit about the focus of this undertaking. Let's take a look.

For just over three years, we worked with a consortium of 14 partners from across Europe on pressing research questions in the disinformation detection sector. This included providing solutions to support factchecking and verification of digital content in various ways. It is important to stress: vera.ai was a research project, hence not everything was or is intended to make it straight into finished products or services. Our main goal, pushing the boundaries of research in this dynamic field, was just as crucial.

Project Outcomes That Live On

A versatile team of academics and practitioners carried out a wide range of work, such as:

- Research on disinformation detection (e.g., how false information spreads through so-called "Coordinated Inauthentic Behavior" (CIB), and other activities by malicious actors).

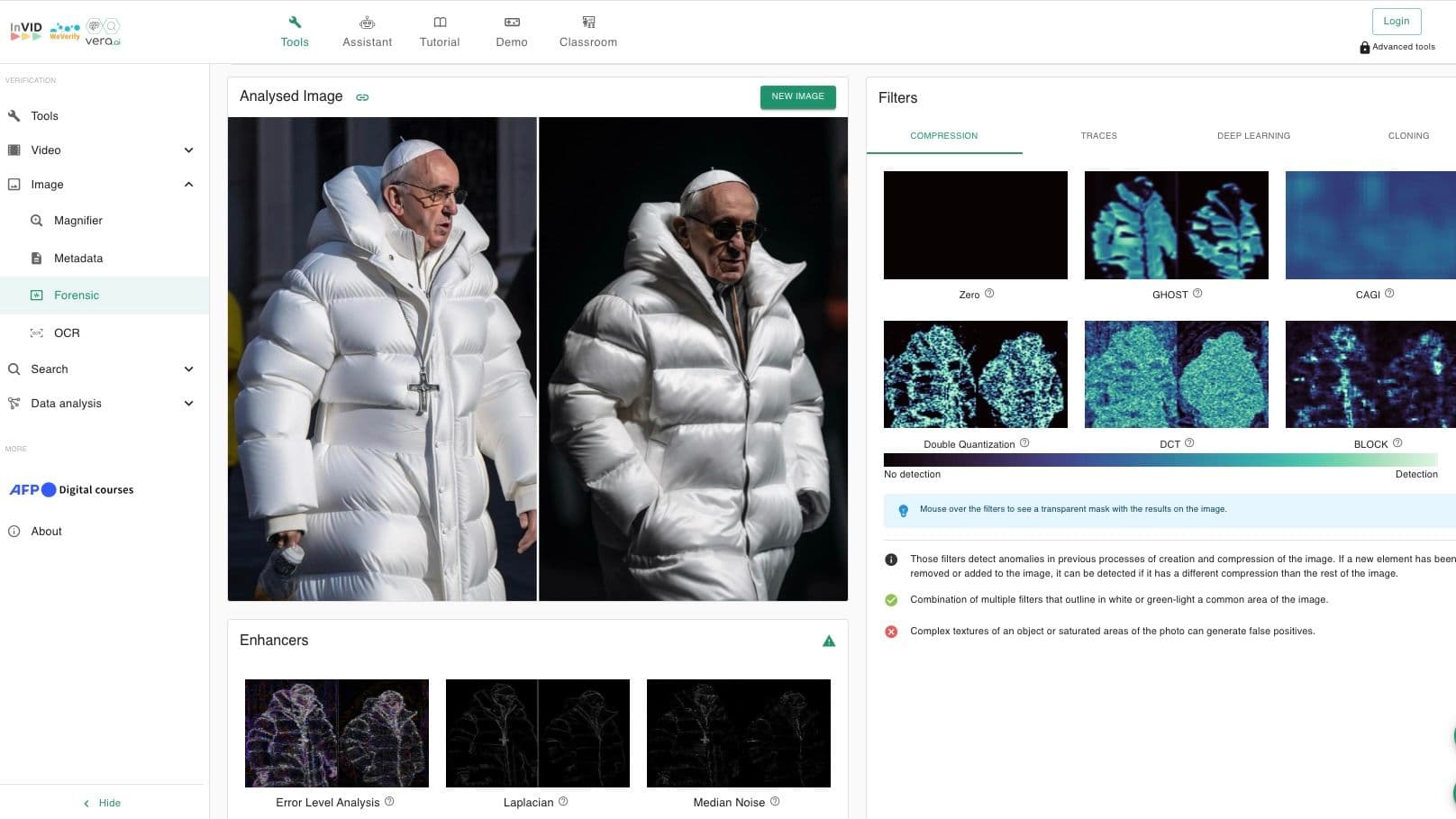

- The development of a variety of tools for content analysis of different modalities like audio, video, images, or text.

- The enhancement and improvement of already existing tools (e.g., Truly Media, co-developed and provided in a public-private partnership by Athens Technology Center and DW, and the so-called verification plug-in, a free browser extension used regularly by more than 160,000 people per week in early 2026).

- A number of knowledge bases and various repositories, most of them open source and accessible for all (see links below and the repositories on the project website and Zenodo).

In total, the project team identified 23 tools and services that were either developed from scratch within vera.ai or further improved during its runtime. They are all listed in an article on the vera.ai website (and are also portrayed in this academic paper, among others). The website article includes a corresponding table pointing to individual factsheets, providing links to the respective services, plus more information on the different results. Most of this is freely accessible and will remain so for the foreseeable future.

Additionally, the consortium made available a large number of publications and presentations of project work and outcomes. All deal with, or are related to, the topics of verification, disinformation detection and the analysis of multimodal content.

The Focus Of Our Engagement

Our role in vera.ai was as diverse as the project itself.

We did our own development work and research, e.g., in text analysis (for an illustrative example, see this paper on subjectivity detection); we co-developed tools and services (with a special focus on improving our co-owned Truly Media, and on work surrounding the analysis of audio content); we led the work on dissemination (in other words: spreading the word about project work and outcomes in many forms and formats); and we had a very active role in the evaluation of the various tools, components, features and services that were developed by project partners. The latter we did in close cooperation with the European Broadcasting Union (EBU).

Evaluation And Testing: Lessons Learned

Together with the EBU, we applied a participatory design and evaluation methodology rooted in Scandinavian design practices from the 1960s. This approach emphasizes the active involvement of relevant stakeholders throughout all development stages to ensure that solutions address real user needs and align with existing workflows rather than forcing users to adapt to new ones.

In vera.ai, we conducted design workshops and evaluation sessions with more than 50 participants from journalism, design, and academia. Contributors came from across the globe – from Australia to Mexico and numerous European countries. Their input and evaluations fed into the vera.ai tools and services (e.g., by improving usability), while also giving our team more knowledge and insights about evaluation methods and approaches.

In particular, we learned the following:

- Participatory design and evaluation are a valuable addition to our methodological skill set.

For us, collaborating with an EBU colleague who is an expert in this methodology allowed us to broaden our methodological expertise and apply participatory design principles in other research projects.

- Placing users at the center is critical when designing AI tools for domains that cannot afford to make mistakes – such as journalism.

Working closely with journalists helped us to ensure that the tools support existing workflows. It also taught us what drives trust – an essential prerequisite for adoption. Sharing these insights with technical partners enabled targeted improvements of the tools.

- Trustworthiness is a central user requirement for AI-based services and must be embedded in the design from the outset.

Making trust a design principle helps users better understand, evaluate, and ultimately rely on AI-driven services. We synthesized these findings from our evaluation activities in a paper that provides actionable guidance for the technical development process.

Looking Back – And Ahead

In a way, the end of vera.ai marked "the end of an era" for us. It concluded a series of EU co-funded projects in which we participated: the series started with InVID (2016-2018), continued with WeVerify (2019-2022), and culminated in vera.ai (2022-2025).

These projects, along with other national or EU-funded initiatives that we have participated in previously, have had a huge and very positive impact on individual team members and our team as a whole. They allowed us to reach, and stay at, the cutting edge of developments in this fast-changing sector, trying our best to feed know-how, knowledge, tools and approaches to other parts of Deutsche Welle (e.g., to the Fact-checking team or other technical units). Furthermore, activities and projects such as vera.ai allow us to further extend our network in the disinformation detection domain – in the academic, professional, and political sectors.

Though vera.ai is now over, it lives on in its results and outcomes, as documented on the project website, and we will continue to be an active player in this field. Thankfully, several new projects focusing on countering disinformation are set to begin later in 2026 – in addition to those running already, like news polygraph and AI-CODE. So, keep watching this space!

Edited by: Olivia Stracke